From an international cyber safety perspective, we will be living in “interesting times” over the next few years.

Over the past decade, there has been a significant rise in pressure from international organisations for the regulation of social media and the ethical design of those environments.

Australia’s very own eSafety Commissioner and the European Commission have become world leaders in pressuring Big Tech to adopt safety-by-design principles across their networks.

That pressure has included billions of dollars’ worth of fines over the past decade for breaches of international law or the non-removal of harmful content.

The Act to protect our children

Now, from 10 December 2025, children under 16 in Australia can no longer keep an existing account or create a new one on many social media networks, as the Online Safety Amendment (Social Media Minimum Age) Act 2024 comes into force.

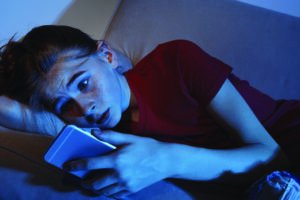

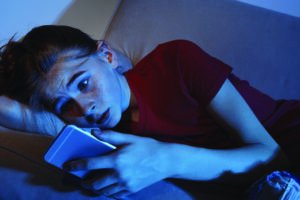

The Act was legislated to help protect young people from platform features designed to keep them scrolling for too long, and from content that can affect their safety, health and wellbeing.

It’s important to view this law not as a ban, but as a delay to help children under 16 build skills and awareness about the risks of technology.

The legislation is aimed at any service or environment that meets the definition of a “social media platform”. The platforms currently targeted by the law are Facebook, Instagram, Snapchat, TikTok, X, YouTube, Reddit, Kick and Twitch.

The networks currently included are likely to grow or change in the coming months as Australia’s eSafety Commissioner, Julie Inman Grant, makes decisions on which platforms might fall under that definition.

At this stage, the excluded environments are messaging apps (WhatsApp, Messenger, Signal, Telegram, etc), email services, voice/video calling apps (Zoom, Teams, FaceTime, etc), online games (Fortnite, Roblox, Minecraft, etc), and professional networking (LinkedIn).

The key component of the legislation is the requirement for declared social media networks to take “reasonable steps” to ensure under-16s in Australia are removed from their platform within a reasonable amount of time once an underage account is identified, and to prevent a child from creating a new account before they turn 16.

The eSafety Commissioner has published regulatory guidance describing examples of “reasonable steps” platforms can take to identify underage accounts – such as age verification or estimation tools, parental-control options, and better detection options.

If the networks fail to take these actions, they can be fined up to $49.5 million.

The legislation will have to rely on cooperation from the platforms, whose response will be based on their own interpretation of the legislation – especially the “reasonable steps” requirement. Excuse the cynicism, but I’m not very confident about what they will deem to be “reasonable”.

I’m strongly of the opinion they will argue that trying to actively remove all Australian children under 16 from their environments is not reasonable.

I believe they will introduce detection methods that pick up only the most basic under-16 identifiers. They might also make a few changes to the code for Australian accounts, or design a specific algorithm to flag kids who have entered a date of birth on an account or recently changed it; users whose daily location is an Australian school; accounts where most of the “friends” are under 16; and the nature of the websites they visit.

Based on these very simple steps, the networks might well remove 100,000 Aussie kids. They could deem that to be “reasonable” under the law, despite Australia having about two million children aged 10 to 15!

I expect the networks will also rely heavily on parents, schools and the eSafety Commissioner to report underage accounts, essentially doing the work for them.

As he told a US Senate Hearing into Child Online Safety in 2024: “I do not support the conclusion that social media causes changes in adolescent mental health.”